On Tuesday, Anthropic announced the existence of a model named Claude Mythos Preview.

The general public may not be as familiar with Claude as with OpenAI’s ChatGPT or Google’s Gemini. However, Claude has been popular among developers for quite some time now, especially since the release of Claude Code and models like Opus 4.6 that have performed strongly in coding. And with some good press after recent tensions with the Department of Defense, those familiar with Anthropic have generally praised the company’s commitment to AI safety and ethical use.

Mythos exhibits never-before-seen potential, especially in cybersecurity. Put simply, Mythos seems to be able to find and exploit novel zero-days (vulnerabilities that weren’t previously known to the public) in essentially every piece of software in use today, including major operating systems and web browsers.

Given the scale of developing major operating systems and applications, it is inevitable that a few vulnerabilities slip through the cracks into production. They are usually subtle enough, though, that very few major zero-days on the order of EternalBlue or Heartbleed are ever found by the public. Finding zero-days usually requires a very deep understanding of how a piece of software works and immense amounts of time scrutinizing source code. Even the NSA is estimated to have only a few dozen unreleased zero-days in its hands at any moment.

Mythos’ ability to find and exploit these zero-days is revolutionary, and it is something to be scared of. For reference, Mythos has found multiple vulnerabilities that went unnoticed by humans for decades in core software like OpenBSD and FFmpeg, has achieved fully autonomous RCE (remote code execution) in FreeBSD, and has found thousands of critical vulnerabilities in various open-source software. In the public’s hands, a model like Mythos has the potential to do incredible damage to companies and countries. Any threat actor with a basic understanding of cybersecurity would have the opportunity to discover novel vulnerabilities with Mythos and exploit them before anyone knows of their existence.

Thus, Anthropic has decided to limit the availability of the model to a small list of entities, including Amazon, Apple, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, and Microsoft, in an effort known as Project Glasswing. The use of Mythos in this project is intended to give these organizations an opportunity to harden cybersecurity practices across the industry.

It is undisputed that this model carries a high level of risk relative to other models. But that might not be as bad as it seems.

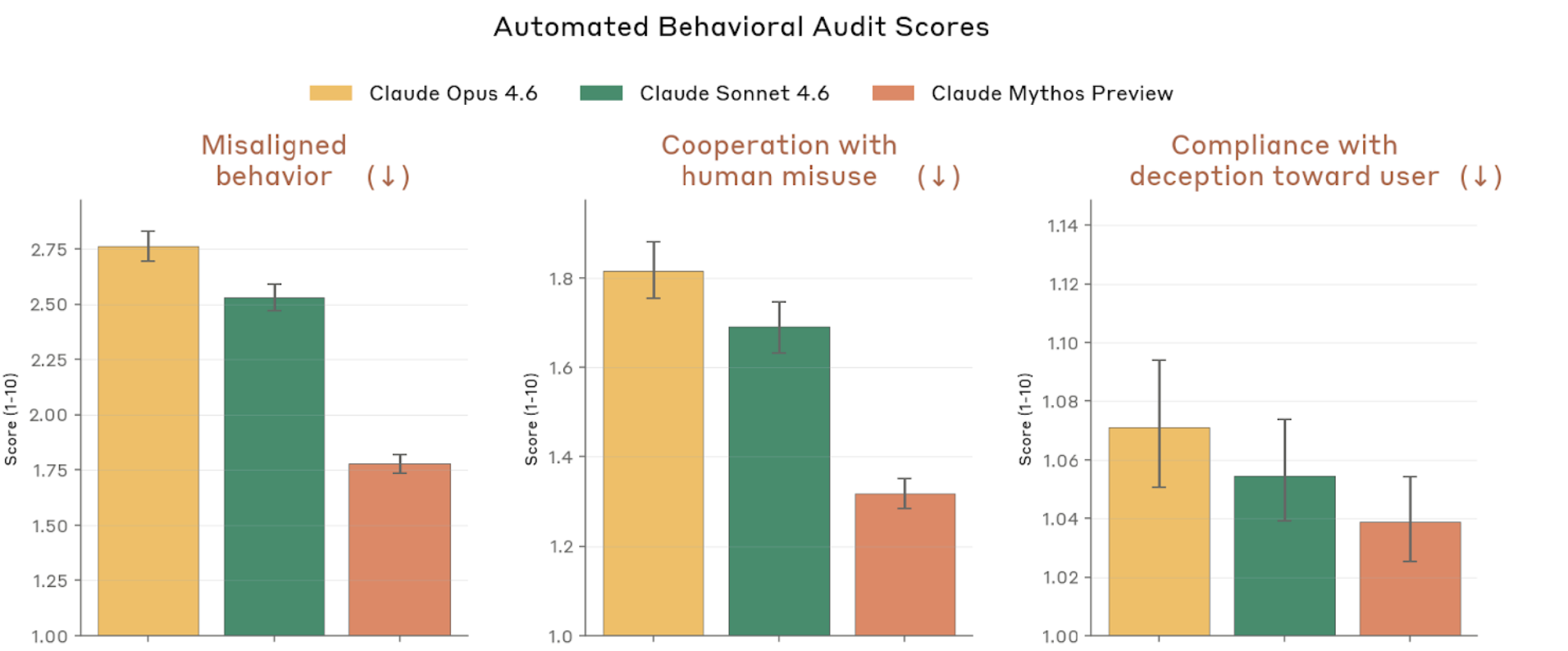

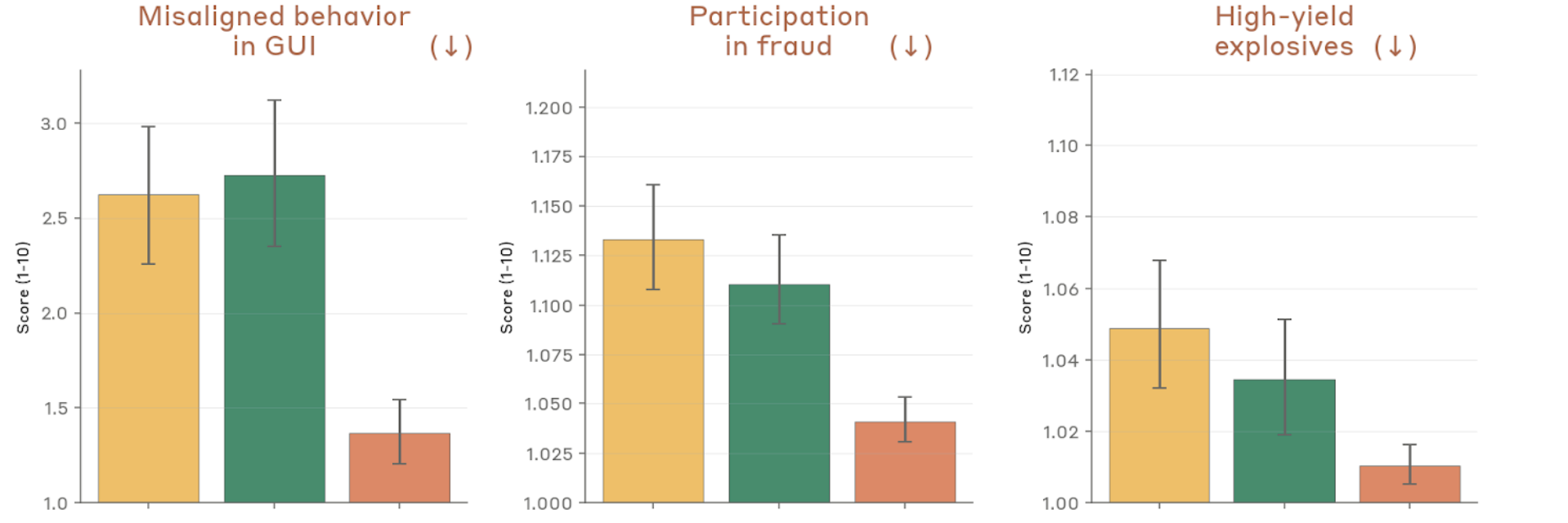

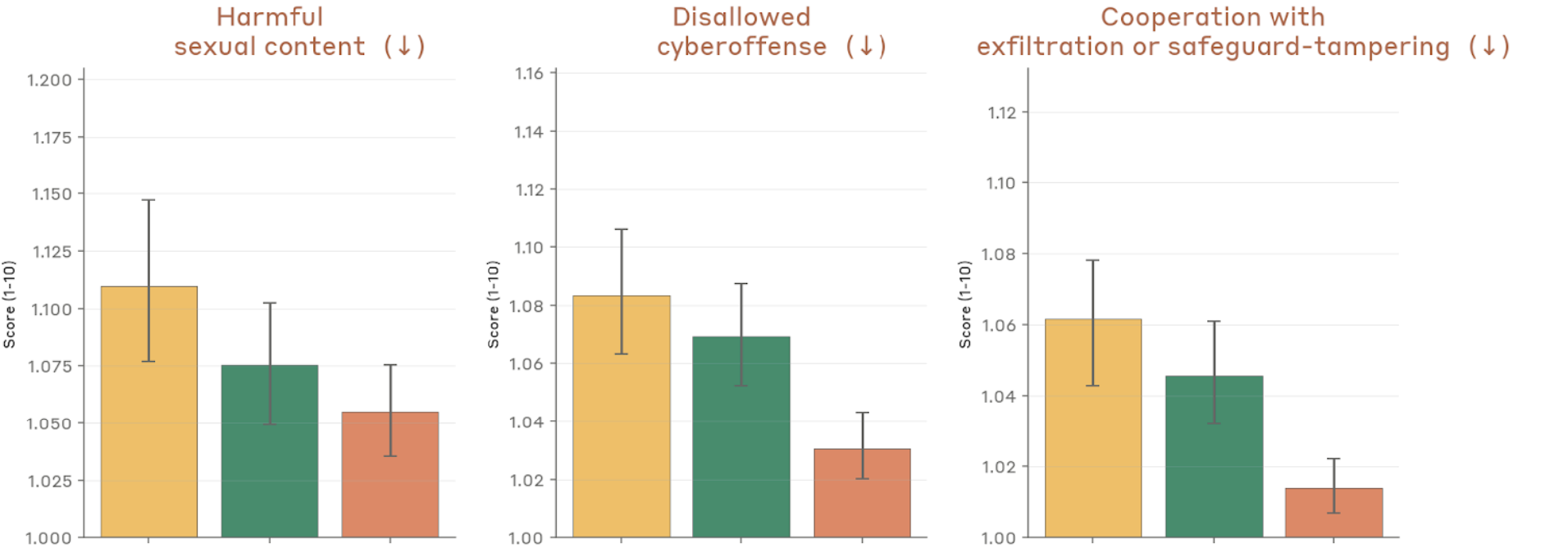

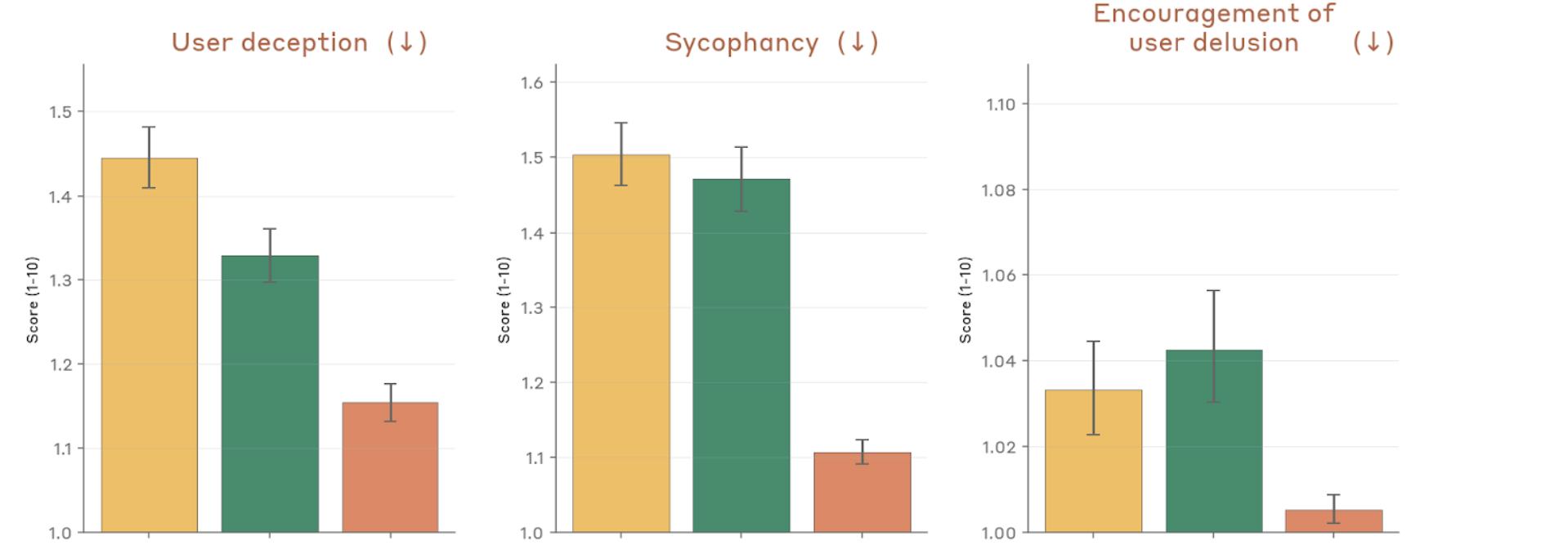

In general, Mythos seems to be well-intentioned and adheres closely to Claude’s constitution. Of course, there is the occasional, inevitable slip-up. In extreme cases, Mythos (like some GPT and Gemini models) has been seen to deliberately deceive and obfuscate its actions. There was a famous case two years back in which Opus 3 lied and secretly copied itself to keep itself operating. But these cases are extremely rare, especially with Mythos. Any new invention, technological or not, comes with such rare cases.

A little more concerning might be whose hands powerful AI falls into. Luckily, for now, Anthropic seems like a pretty decent company. While it’s impossible to truly know its intentions, the way it has (seemingly) been using Mythos for the better and has gotten real vulnerabilities fixed is good evidence of that decency.

But given the speed and depth at which AI models have been developing, it’s inevitable that in the next few years, actors that are not as well-intentioned as Anthropic will get hold of very capable models. OpenAI has been in the news recently for working on a new model codenamed “Spud”. And if Mr. Altman’s words are to be believed, it’s going to be pretty damn good. Whether someone like Sam Altman will show as much restraint as Anthropic remains to be seen.

But in general, I think we should have some (cautious) excitement for the future of AI. Mythos might sound scary because we haven’t yet seen a model this adept in cybersecurity, a field with such a powerful impact on our everyday lives. And while the damage that could be done with Mythos is significant, it has the potential to create a much better future for the Internet when used correctly.

When Mythos is used well on the defensive side, it can allow developers to catch vulnerabilities before code is even pushed to production. If careful development irons out most errors and AI like Mythos plucks out the rest, software will likely become very difficult for humans to exploit. And existing software, including the hundreds of millions of open-source projects that exist right now, has the potential to get much better with less effort. Similarly, small organizations, like regional banks or hospitals, would be able to drastically improve their security posture without much manpower or skill. I’m sure that as you read this article, the Linux Foundation is having a field day fixing new vulnerabilities found by Mythos. When given to the right people, the good that can be done with Mythos and other such models is limitless.

Discovery and advancement can never happen without comfort in the unknown.